Machine learning – and more specifically deep learning (DL) – is the contemporary AI darling of research organizations and AI conferences. DL projects have shown remarkable progress over the past several years in solving very specific tasks, and almost annually a new neural net architecture surfaces that appears to tackle bigger challenges more quickly and accurately. Even so, there are many tasks for which machine learning is inherently not well suited. These tasks require conceptual comprehension; N-dimensional curve fitting or pattern matching by themselves just won’t get you there.

Conceptual comprehension

What do I mean by “conceptual comprehension”? Quite simply, I’m referring to the fact that humans generally reason over qualitative high-level concepts and intentions instead of focusing on quantitative values such as trajectories, velocities, brightness, etc., ad nauseam. This can be seen in the definitions of the word concept (Oxford Languages and Google – English | Oxford Languages):

- an abstract idea; a general notion

- a plan or intention; a conception

- an idea or mental picture of a group or class of objects formed by combining all their aspects

Note the words used to describe a concept – abstract, notion, plan, idea, mental picture. Nowhere in there does a concept involve hard numbers, ranges, or fixed inputs. Our oversized neocortex provides us with the ability to reason over concepts and act on them, which is something that DL still struggles with. While impressive tools like AlphaGo and GPT-3 appear superhuman and highly intelligent, the fact remains that they are myopically focused tools that at best pattern match over tremendously large datasets and at worst generate an averaged response that optimizes an objective function selected at training – which by the definition of average is not superhuman at all. AlphaGo will never be able to play checkers, and GPT-3 cannot author a book for a specific genre, without significant human effort to keep them on the rails or, worse, a complete redesign and/or retraining of the systems.

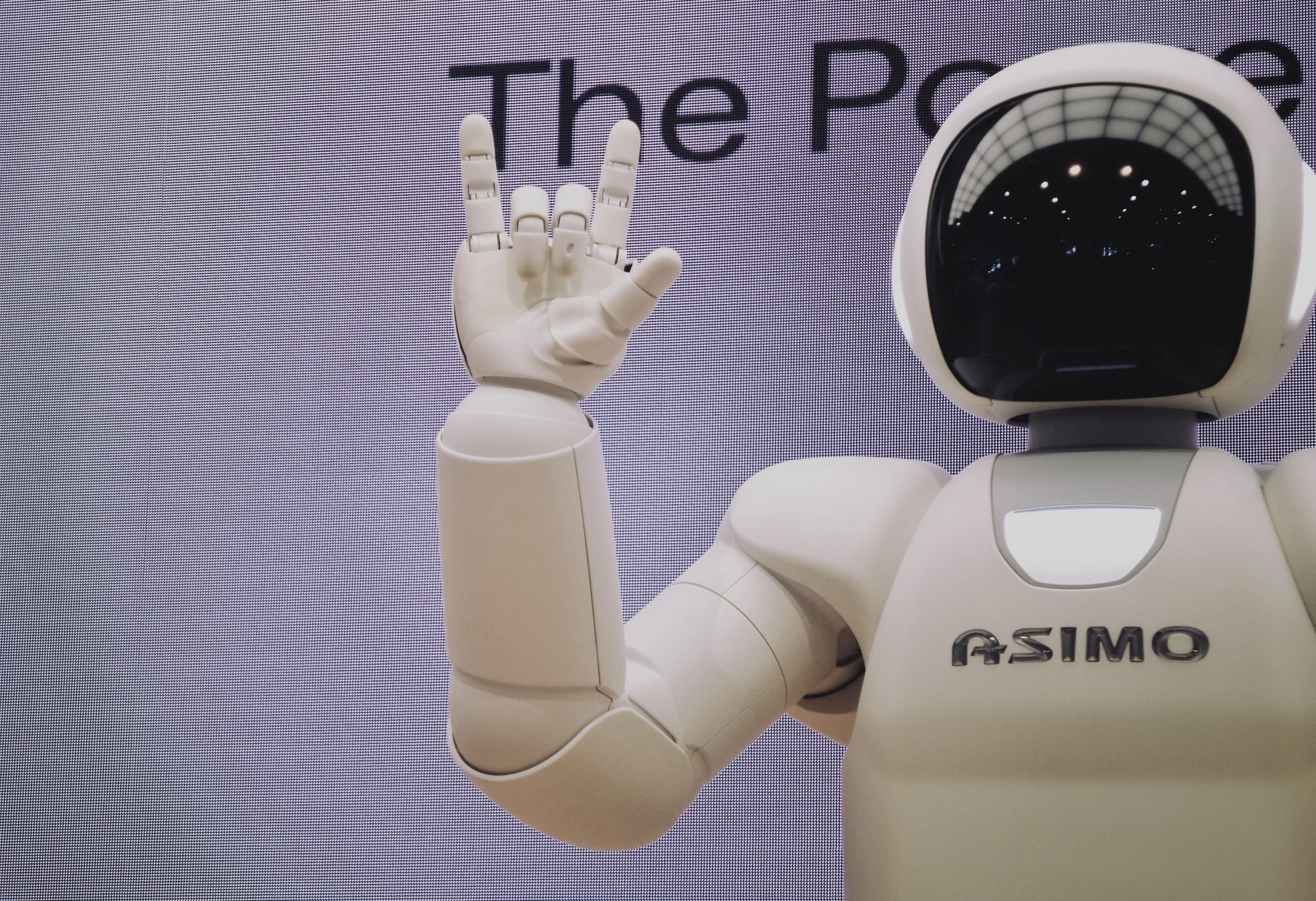

As an example – say I asked you to make a ham and cheese sandwich on rye – no problem, right? Certainly an AI agent driving a robot could be trained to do the same thing using computer vision (CV) to recognize the rye bread, ham, cheese, mustard, and knife to name a few key components. After millions (billions?) of iterations the agent can successfully assemble a sandwich. Call this one AlphaSandwich.

Now let’s make a hamburger… Same concept (bread, meat, toppings) but now AlphaSandwich’s CV model is broken, since a bun looks quite different from sliced rye, and slices of ham and a burger patty share no common shape or appearance. While we’re at it, we discover we’ve run out of all utensils except forks… AlphaSandwich has no idea that the ham and cheese sandwich is conceptually the same as a hamburger, nor that something not resembling a flat-ish utensil can conceptually be used to spread mustard (“AI and Robots Are a Minefield of Cognitive Biases”).

What about transfer learning or fine-tuning?

Transfer learning and fine-tuning are approaches that take a pre-trained, general-purpose DL model and repurpose it for a domain-specific use. Essentially the pre-trained model is frozen and used as the feed-forward front end to a domain-specific model: the outputs of the pre-trained model become the inputs to a trainable model that can leverage those generalized outputs for more specialized categories, regression problems, or transformations. While this is a step in the “conceptual” direction, in that it takes a generalized approach and applies it to a specific need, the system still does not comprehend the domain concept; it is simply optimizing quantitative output for a set of inputs. Selecting an appropriate pre-trained model and deciding how to map it into a domain-specific model remain conceptual tasks performed by a human researcher who comprehends the nuances.
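
To make the "frozen front end, trainable head" arrangement concrete, here is a minimal sketch in plain Python. It is a toy under stated assumptions, not a real pre-trained network: the fixed weights in `FROZEN_W`, the helper names (`frozen_features`, `train_head`, and so on), and the toy labeling rule are all hypothetical and chosen only for illustration.

```python
import math
import random

random.seed(0)

# ASSUMPTION: stand-in for a pre-trained model. These weights are fixed
# and are never updated during training below.
FROZEN_W = [[0.9, -0.4], [-0.3, 0.8], [0.5, 0.5]]

def frozen_features(x):
    """Feed-forward pass through the frozen front end (tanh units)."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict(head, feats):
    """Trainable head: logistic regression over the frozen features."""
    return sigmoid(head["b"] + sum(w * f for w, f in zip(head["w"], feats)))

def log_loss(head, data):
    eps = 1e-12
    total = 0.0
    for x, y in data:
        p = predict(head, frozen_features(x))
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)

def train_head(head, data, lr=0.1, epochs=100):
    """Gradient descent on the head only; the front end is untouched."""
    for _ in range(epochs):
        for x, y in data:
            feats = frozen_features(x)
            err = predict(head, feats) - y  # d(log loss)/d(logit)
            head["w"] = [w - lr * err * f for w, f in zip(head["w"], feats)]
            head["b"] -= lr * err
    return head

# ASSUMPTION: toy "domain" task, label is 1 when the first input is larger.
data = []
for _ in range(40):
    x = [random.uniform(-1, 1), random.uniform(-1, 1)]
    data.append((x, 1 if x[0] > x[1] else 0))

head = {"w": [0.0, 0.0, 0.0], "b": 0.0}
frozen_before = [row[:] for row in FROZEN_W]
loss_before = log_loss(head, data)
train_head(head, data)
loss_after = log_loss(head, data)
print(f"loss before: {loss_before:.3f}, after: {loss_after:.3f}")
```

Nothing in the training loop touches `FROZEN_W`; only the head's numbers change. What the head ends up with is a numeric mapping from features to a label, which is the sense in which the system optimizes output without comprehending the domain.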

What about multitask deep learning?

Multitask DL is the most recent trend in trying to tackle the requirement for multiple actions in response to a diverse mixture of stimuli (features if you will) (“How to Do Multi-Task Learning Intelligently”). In theory the model is trying to learn which actions need to be taken simultaneously when presented with a diverse set of features. At the end of the day, this is just throwing a bigger feature set and a more complex model at a bigger challenge in order to reduce the number of overall models and datasets required for an ensemble or collaborative DL approach. No conceptual learning is taking place, and no true comprehension is occurring; the model is once again optimizing an objective function for what has been seen before, and is unable to predictably and reliably extrapolate to unseen conditions. This is not due to a lack of research, but simply a limitation of DL as it is commonly utilized today.
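
A rough sketch of what "one model, several tasks" can look like in code: a shared trunk feeding one output head per task, trained on the sum of the per-task losses. This is a hand-rolled toy in plain Python, not any particular library's API; the names (`trunk`, `head_a`, `head_b`, `joint_loss`), the layer sizes, and the two toy regression targets are all assumptions made for illustration.

```python
import math
import random

random.seed(1)
HIDDEN = 4

# Shared trunk (2 inputs -> HIDDEN tanh units) plus one linear head per task.
trunk = [[random.uniform(-0.5, 0.5) for _ in range(2)] for _ in range(HIDDEN)]
head_a = [random.uniform(-0.5, 0.5) for _ in range(HIDDEN)]
head_b = [random.uniform(-0.5, 0.5) for _ in range(HIDDEN)]

def forward(x):
    """Return the shared hidden activations and both task outputs."""
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in trunk]
    out_a = sum(w * hj for w, hj in zip(head_a, h))
    out_b = sum(w * hj for w, hj in zip(head_b, h))
    return h, out_a, out_b

def joint_loss(data):
    """Single objective: sum of the two tasks' squared errors, averaged."""
    total = 0.0
    for x, t_a, t_b in data:
        _, out_a, out_b = forward(x)
        total += (out_a - t_a) ** 2 + (out_b - t_b) ** 2
    return total / len(data)

def train(data, lr=0.02, epochs=300):
    for _ in range(epochs):
        for x, t_a, t_b in data:
            h, out_a, out_b = forward(x)
            g_a = 2 * (out_a - t_a)  # d(loss)/d(out_a)
            g_b = 2 * (out_b - t_b)  # d(loss)/d(out_b)
            for j in range(HIDDEN):
                # Errors from both tasks flow back into the shared unit.
                g_h = g_a * head_a[j] + g_b * head_b[j]
                g_pre = g_h * (1 - h[j] ** 2)  # through tanh
                head_a[j] -= lr * g_a * h[j]
                head_b[j] -= lr * g_b * h[j]
                for i in range(2):
                    trunk[j][i] -= lr * g_pre * x[i]

# ASSUMPTION: two toy tasks on the same inputs (sum and difference).
data = []
for _ in range(50):
    x = [random.uniform(-1, 1), random.uniform(-1, 1)]
    data.append((x, x[0] + x[1], x[0] - x[1]))

loss_before = joint_loss(data)
train(data)
loss_after = joint_loss(data)
print(f"joint loss before: {loss_before:.3f}, after: {loss_after:.3f}")
```

The shared trunk saves a second model and a second training run, but the whole arrangement is still one objective function being minimized over examples it has seen.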

How can we better enable conceptual comprehension in AI systems?

One movement that came out of NeurIPS 2019 was a set of narratives on how to expand the capabilities of DL to generalize and automatically transfer knowledge between scenarios and/or domains without the “go big or go home” mentality (2019 Conference). Some called for newer approaches that introduce meta-level architectures, others for leveraging collaborative efforts between smaller models in a hierarchical approach, and yet another strategy called for hybrid systems leveraging the best of symbolic AI and DL approaches. Here at Veloxiti we’ve been working with this last, hybrid approach for years, leveraging ML where it makes sense, DL where it makes sense, and tying in human conceptual comprehension where the other mechanisms fall short. This provides a robust, transparent and explainable approach to creating intelligent agents for challenging domains.

One interesting offshoot that has also come out of the call for AI systems to be more conceptually aware is the “Common Sense AI” dataset, where simply optimizing an objective function isn’t good enough – you also need to demonstrate an understanding of the task at hand and how to weight actions using multiple criteria (Razzaq). The hope is that this kind of dataset will provide researchers with another tool to gauge the conceptual capabilities of their systems.

Conclusion

While deep learning is an incredibly powerful tool, it is also narrowly focused in what it can do. Well-designed and trained models can alleviate cognitive load for specific tasks, but by themselves they are not able to recognize the larger patterns and generalize to unseen circumstances the way humans can. Several approaches exist to address this challenge, but the general consensus is that no one model will ever exist to rule them all. Hybrid solutions appear to be the most promising approach, whether a new AI approach is developed to bridge the conceptual comprehension gap or existing non-DL approaches are leveraged to provide robust, transparent and explainable agents.

References

2019 Conference. https://neurips.cc/Conferences/2019. Accessed 26 July 2021.

“AI and Robots Are a Minefield of Cognitive Biases.” IEEE Spectrum: Technology, Engineering, and Science News, https://spectrum.ieee.org/automaton/robotics/robotics-software/humans-cognitive-biases-facing-ai. Accessed 26 July 2021.

“How to Do Multi-Task Learning Intelligently.” The Gradient, 22 June 2021, https://thegradient.pub/how-to-do-multi-task-learning-intelligently/.

Oxford Languages and Google – English | Oxford Languages. https://languages.oup.com/google-dictionary-en/. Accessed 26 July 2021.

Razzaq, Asif. “Researchers from IBM, MIT and Harvard Announced The Release Of DARPA ‘Common Sense AI’ Dataset Along With Two Machine Learning Models At ICML 2021.” MarkTechPost, 20 July 2021, https://www.marktechpost.com/2021/07/20/researchers-from-ibm-mit-and-harvard-announced-the-release-of-its-darpa-common-sense-ai-dataset-along-with-two-machine-learning-models-at-icml-2021/.